The Orientation Layer — Five players. One Pattern. The territory that remains open.


There is a moment in any major transition when the speed of change exceeds the speed of sense-making. Not the speed of innovation. That can always go faster. The speed at which people, institutions, and systems can orient to what is actually happening. We appear to be inside that moment now.

In the first six weeks of 2026, every major AI lab released frontier models within hours of each other. Anthropic expanded its surface area with Claude Code, Cowork (a desktop automation tool aimed at non-developers), and a growing plugin ecosystem, while donating MCP to the Linux Foundation and entering the political arena with $20 million toward AI regulation advocacy. OpenAI reported record adoption of Codex and launched Frontier, its enterprise orchestration platform. Google shipped Gemini 3 Deep Think. Microsoft, after opening the year with a measured tone around responsible deployment, found itself, like every other major player, competing in a race whose pace leaves little room for restraint.

None of this is criticism. The competitive dynamics are real. The stakes are real. The capital being deployed, over $600 billion in combined capex commitments from the hyperscalers alone, is building infrastructure that will shape how intelligence integrates with daily life for decades. The labs are solving hard problems and shipping real capabilities at remarkable speed.

What is worth examining is what happens around that speed. Not inside the labs, but in the broader landscape. In the markets, in the workforce, in the regulatory environment, in the lived experience of the people navigating all of this in real time.

Because the pattern emerging across those layers is consistent. And it points to a gap.

What the Signals Show

The Markets

The financial markets are the closest thing modern civilization has to a real-time collective nervous system, and what they are expressing in early 2026 is revealing.

The S&P 500 has been consolidating in a range since the start of the year. Not crashing, not rallying. Oscillating. In the first week of February alone, a wave of AI-related sell-offs wiped billions from legacy software companies, an event traders began calling the “SaaSpocalypse.” Wealth management stocks dropped 7 to 8% in a single session after a fintech startup demonstrated agentic AI could automate complex tax strategy. Logistics firms, commercial real estate brokers, insurance companies: one sector after another repriced as investors attempted to model disruption they could feel but couldn’t quantify.

The market is not irrational here. It is doing what markets do: trying to price in a future it cannot yet see clearly. The significance is that the uncertainty is not limited to one sector or one technology. It is systemic. The market is moving between creation narrative and destruction narrative because both carry weight, and no framework has yet emerged to reconcile them.

Gold is approaching $5,000. The VIX is elevated. Earnings are strong, with S&P 500 companies reporting 12% growth in Q4, but the mood is cautious. The numbers say growth. The behavior says disorientation.

The Workforce

The workforce data tells a similar story in human terms.

AI usage among workers rose significantly in 2025. In the same period, worker confidence in the relevance of their own skills declined. Companies invested over a trillion dollars in AI infrastructure. More than half report no measurable return. Workplace AI adoption flatlined in Q4 2025, and when researchers at Gallup investigated why, the most common barrier was not complexity or resistance. It was that people could not see what these tools were actually for in their lives.

The industry has framed this as an adoption gap, a problem to solve with better onboarding, better interfaces, better use cases. That framing may be incomplete. What the data also supports is that people are sensing a shift in their relationship to their own capabilities that the tools themselves do not address. Confidence is dropping not because the tools are bad, but because the integration of those tools into work and life is happening faster than the frameworks for navigating that integration.

Alongside this, a parallel pattern is emerging. The rapid democratization of AI-powered development, sometimes called vibe coding, has produced a wave of new builders, new founders, new creators entering the market with unprecedented access to powerful tools. This is genuinely exciting. It is also structurally similar to earlier waves of democratized access, from day trading to the creator economy, where powerful infrastructure generated enormous activity without proportional distribution of outcomes. The tools are new. The pattern is familiar. And when the tool being democratized is cognition itself, the stakes of that pattern are worth watching carefully.

The Regulation Layer

Governments worldwide are responding to AI with significant legislative ambition.

The EU AI Act reaches full implementation in August 2026, establishing risk-based obligations for AI systems with enforcement carrying fines up to 7% of global turnover. The EU Data Act extends sovereignty requirements beyond personal data to industrial and IoT data. Eighteen US state privacy laws are now active, with enforcement accelerating. India, China, Vietnam, and thirty-five African nations are all advancing data sovereignty legislation. AWS has launched a physically separate European Sovereign Cloud, with €7.8 billion committed through 2040. IDC projects that by 2028, 60% of multinational firms will operate split AI stacks across sovereign zones, tripling integration costs.

The scale of regulatory activity reflects the seriousness with which governments are approaching this transition. It also reveals the difficulty of the task. In the US, federal and state-level approaches are in tension, with competing visions for how governance should be structured. At the WHO Executive Board last week, a substantive debate unfolded between nations that want harmonized AI standards and nations, particularly in the Global South, that want stronger protections against asymmetric benefit flows. These are not simple disagreements. They reflect genuine differences in how different parts of the world are experiencing the same technological transition.

What regulation surfaces, when read as signal: nation-states are navigating the same tension as individuals and enterprises. The demand for meaningful control over systems that are reshaping daily life. The recognition that deployment is outpacing governance. Regulation is not failing. It is working through a genuinely unprecedented challenge.

The Personal Layer

Beneath the institutional signals, there is a human experience that deserves direct acknowledgment.

The information environment has become extraordinarily noisy. Social media feeds increasingly blend human and AI-generated content in ways that are difficult to distinguish. The volume of advice, expertise, and opinion available on any topic has never been higher, and the signal-to-noise ratio has arguably never been lower. For many people, the challenge is no longer access to information. It is the ability to orient within it. To determine what matters. To maintain their own sense of direction in an environment optimized for engagement rather than coherence.

This is not a personal failing. It is a structural condition. When the systems people rely on for orientation, whether media, institutions, or economic structures, are themselves in flux, the cognitive load of navigating daily life increases in ways that are real and measurable.

And yet, something else is also happening. Tools and frameworks for personal sovereignty are emerging in parallel with the noise. Firefox has committed to making AI strictly opt-in, with centralized user controls. Local-first AI agents, including projects like goose and AnythingLLM, are building infrastructure for individuals to run AI on their own hardware. Self-sovereign identity frameworks are gaining traction. California’s DROP law, effective January 2026, gives consumers legal rights over personal data deletion. OpenClaw, which we documented in ILR-02, demonstrated at scale that when users are given genuine choice between managed convenience and sovereign infrastructure, a meaningful number choose sovereignty.

Both realities are true simultaneously. The noise and the emergence. The disorientation and the new tools for navigating it. The personal layer holds the full picture.

The Enterprise Layer

Organizations are navigating the same tension at institutional scale.

93% of executives surveyed say AI sovereignty must factor into business strategy. 95% plan to build their own AI platforms. Only 13% are on track to do it. Only 1% consider themselves mature in AI deployment. The hyperscalers, recognizing this demand, are now offering sovereignty as a feature, building separate infrastructure and compliance frameworks to meet enterprise requirements that did not exist three years ago.

The gap between strategic intention and operational readiness is significant. And it matters beyond the boardroom, because when the organizations people work within are uncertain about how to navigate this transition, that uncertainty shapes job security, career planning, and household economics in direct and personal ways.

The market behavior around enterprise AI reflects this. Sectors are being repriced not on current fundamentals but on anticipated disruption. The repricing may prove prescient or premature. Either way, it signals that the enterprise landscape is in a period of reorientation that has not yet resolved.

The Convergence

Here is what becomes visible when the five layers are read together.

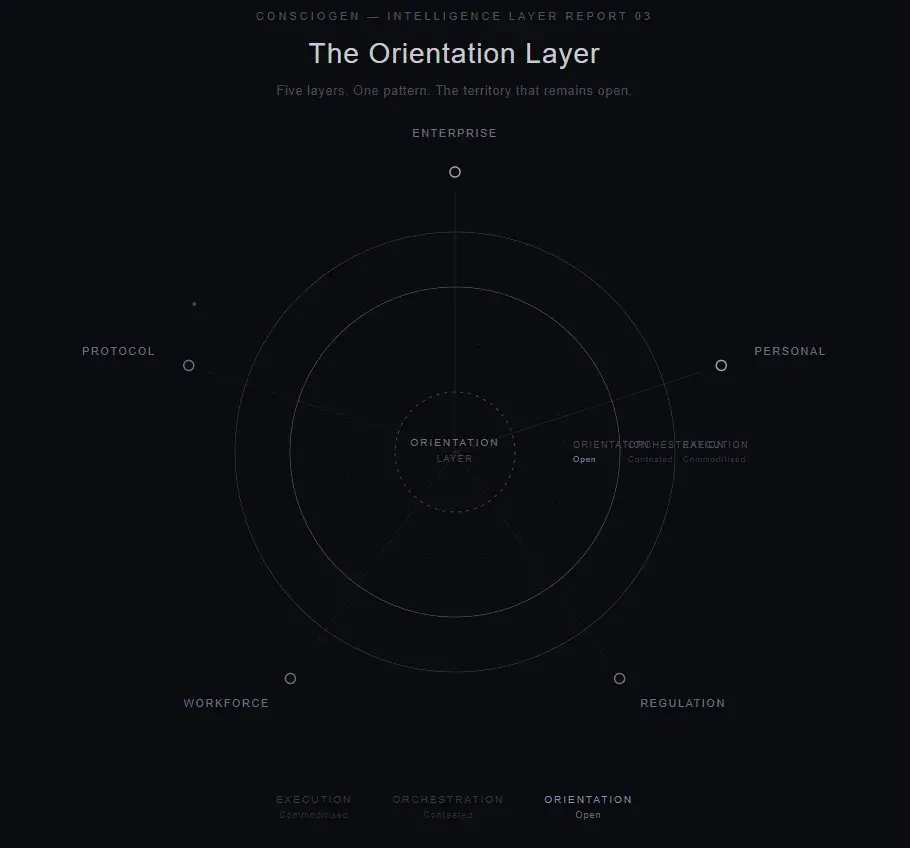

The protocol layer is being rebuilt at speed. The workforce is gaining tools while losing confidence. Governments are legislating with ambition and navigating genuine complexity. Individuals are experiencing unprecedented information density alongside emerging sovereignty tools. Enterprises are demanding capabilities they cannot yet build.

These are not five separate stories. They are one pattern expressing itself across every layer simultaneously: the speed of capability deployment has exceeded the speed of orientation.

The adoption gap the industry is working to close may be, in part, a signal worth listening to rather than a problem to solve. It may represent millions of people and thousands of institutions communicating something important: that engagement requires not just access to powerful tools, but the capacity to orient to what those tools mean in the context of a life, a career, an organization, a society.

Yuval Noah Harari has described this moment as the biggest psychological experiment in human history. Peter Diamandis has called it the greatest inflection point in 125 years. Sam Altman has framed it as the age of builders. Each of these framings captures something real. What none of them fully addresses is the question underneath:

What orients the human through the transition?

Not what tools they need. Not what regulations protect them. Not what platform to build on. What helps a person, or an organization, or a society, maintain coherence when every system they have relied on for orientation is itself in motion?

The Open Territory

ILR-01 asked whether humans could maintain agency through AI integration or whether cognitive capability would atrophy as tools became substrate. ILR-02 documented that when given genuine choice, users demonstrably choose sovereignty, and identified the execution layer commoditizing while the orientation layer remained open.

ILR-03 finds both observations confirmed at broader scale.

The execution layer has commoditized. Models are converging. Technical differentiation between frontier labs is measured in weeks, sometimes days. This is why competitive energy is shifting from capability to positioning. Some Super Bowl ads showed this shift in practice.

The orchestration layer is where the most visible competition is happening: OpenAI’s Codex and Frontier platform; Anthropic’s MCP ecosystem, Claude Code, and Cowork; and Google’s agent frameworks. Each represents a serious effort to become the coordination infrastructure for how AI integrates with enterprise and individual workflows. This layer will absorb significant capital and attention over the coming months, and the work being done there is substantive.

The orientation layer remains largely unaddressed. This is the layer that asks: what should automation serve? How does a human maintain purposeful direction when execution capacity exceeds orientation capacity? What infrastructure supports not just the deployment of intelligence, but the coherent integration of it into a life worth living?

This is not a criticism of the work being done at other layers. Execution and orchestration are necessary. The observation is that a gap exists at the orientation layer, it is measurable across the signals documented in this report, and it represents territory where meaningful work remains to be done.

What Consciogen Is Building

Consciogen operates at the orientation layer. Not the layer that builds AI tools. Not the layer that orchestrates them. The layer that asks what automation should serve, and builds infrastructure to support that question.

We are developing sovereignty-first knowledge architecture designed for this territory. Systems that maintain coherence, provenance, and agency, built for the moment when execution is no longer the constraint and the question becomes not “how do I automate this?” but “what’s worth building toward?”

The orientation layer is open territory. The convergence documented in this report is creating demand for it. We are building there, and we welcome others who recognize the same gap.

The Through-Line

ILR-01 documented the architecture of dependency in a single announcement. ILR-02 documented the sovereignty signal in a single open-source release. ILR-03 documents the orientation layer across a civilizational landscape, and names the territory that remains open.

The question this report leaves is not prescriptive. It is observational, and it is sincere:

As the capacity to execute accelerates beyond the capacity to orient, who builds for the gap?

Consciogen publishes Intelligence Layer Reports to surface the signals that shape how intelligence integrates with human life, before the mainstream narrative catches up.

ILR-03 covers the period January 24 to February 12, 2026.
